Co-Registration Now Available - At Pixel Level

Change detection algorithms are quite noisy because they detect ALL the changes between two images. When the imagery is not aligned, the results can be much worse, and the output can even become unusable because pixels in the two input images no longer correspond to the same locations on the ground.

With our temporal change detection, we filter out all the “normal” changes so that our customers can quickly focus on just the unusual changes they are looking for. Our Automatic Image Anomaly Detection System (AIADS) does this well by learning from multiple historical images. However, when the historical images don’t align with the candidate image, the results can be improved by “shifting and stretching” the images so that the output reflects actual changes on the ground and not shifted pixels.
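To make the “shifting” part concrete, here is a minimal sketch of one common way to estimate a whole-pixel offset between two images: phase correlation with NumPy. This is not the Coreg algorithm itself. The function name `estimate_shift` is hypothetical, the sketch assumes single-band images of equal size, and it only handles translation (no “stretching”).

```python
import numpy as np

def estimate_shift(reference, candidate):
    """Return the integer (row, col) shift that aligns candidate to reference.

    Illustrative phase correlation only; assumes two 2-D arrays of the
    same shape that differ by a (circular) translation.
    """
    f_ref = np.fft.fft2(reference)
    f_cand = np.fft.fft2(candidate)

    # Normalised cross-power spectrum: keeps phase, discards magnitude.
    cross_power = f_ref * np.conj(f_cand)
    cross_power /= np.abs(cross_power) + 1e-12

    # The inverse transform peaks at the offset between the two images.
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)

    # Peaks past the midpoint wrap around and represent negative shifts.
    shift = [p if p <= size // 2 else p - size
             for p, size in zip(peak, correlation.shape)]
    return tuple(int(v) for v in shift)
```

Applying the returned shift to the candidate, for example with `np.roll(candidate, shift, axis=(0, 1))`, brings it back onto the reference grid. Real imagery would typically also need sub-pixel estimation and local warping, which this sketch leaves out.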

Let’s look at an example from the part of the world where the borders of Oman, Yemen, and Saudi Arabia meet.

Satellite imagery taken from this unique area shows the “end of the road” for a route heading north in Oman towards the Saudi border.

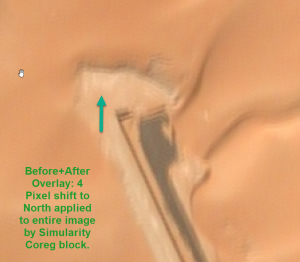

We ran these images, along with others from different dates, through our new Coreg algorithm, using the most recent image as the reference image. Here are the original image and the shifted (output) image. Can you see the difference?

You most likely can’t see any difference. However, when using highly accurate analytical algorithms such as AIADS, a pixel shift of just 3-5 pixels can result in a noisy output anomaly heatmap.
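The effect of a small misalignment on a pixel-wise comparison can be illustrated with a toy example. The sketch below uses a synthetic random scene, a 3-pixel horizontal shift, and an arbitrary threshold of 0.1; all of these are assumptions chosen for illustration, and simple differencing stands in for AIADS, which works differently.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Synthetic single-band "scene" with values in [0, 1); nothing in it changes.
scene = rng.random((128, 128))

# The same scene, captured once perfectly aligned and once 3 pixels off.
aligned = scene.copy()
misaligned = np.roll(scene, shift=3, axis=1)

# Naive change map: absolute per-pixel difference against the reference.
diff_aligned = np.abs(aligned - scene)
diff_misaligned = np.abs(misaligned - scene)

# Fraction of pixels flagged as "changed" at an arbitrary threshold.
threshold = 0.1
changed_fraction_aligned = float((diff_aligned > threshold).mean())
changed_fraction_misaligned = float((diff_misaligned > threshold).mean())

print(f"aligned:    {changed_fraction_aligned:.2%} of pixels flagged")
print(f"misaligned: {changed_fraction_misaligned:.2%} of pixels flagged")
```

Nothing in the scene changed, yet the misaligned copy flags a large share of pixels. Real imagery is spatially smoother than random noise, so the false changes there tend to concentrate along edges such as roads and building outlines.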

To better see the results of the Coreg algorithm, here is an image consisting of an overlay of both the original and shifted images. Now can you see the pixel shifts?
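One simple way to build this kind of overlay is a false-color composite, with one image in the red channel and the other in green and blue. The sketch below is an assumption about how such a view could be made, not a description of how the overlay in this post was produced; the function name `overlay` is hypothetical.

```python
import numpy as np

def overlay(original, shifted):
    """Combine two single-band images into one RGB composite.

    The original goes into the red channel and the shifted image into
    green and blue. Where the two agree the result is grey; where they
    differ, red or cyan fringes appear.
    """
    if original.shape != shifted.shape:
        raise ValueError("images must have the same shape")
    return np.stack([original, shifted, shifted], axis=-1)
```

Identical inputs give a purely grey composite, so any colored fringe in the result points to a pixel shift or a real change.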

Our new Coreg algorithm is now available on Up42 and to our AIADS Enterprise License customers – contact us for details.

Imagery credit: © CNES (2018), Distribution AIRBUS DS