Anomaly Detection of SAR Imagery

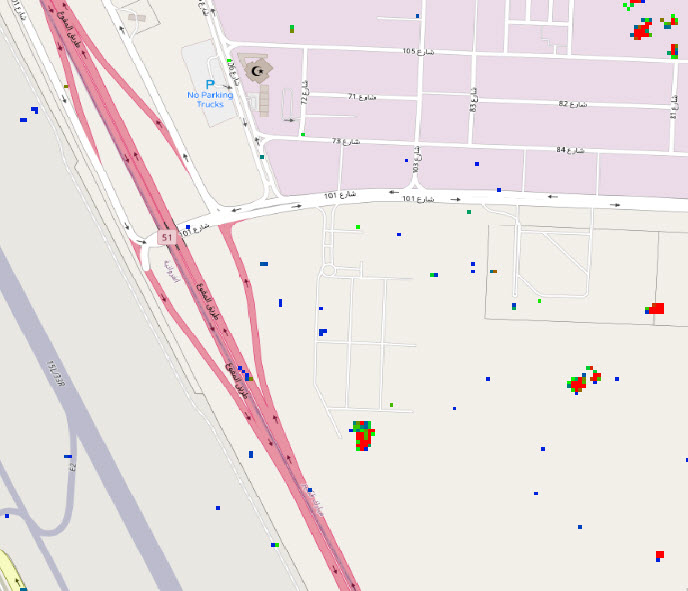

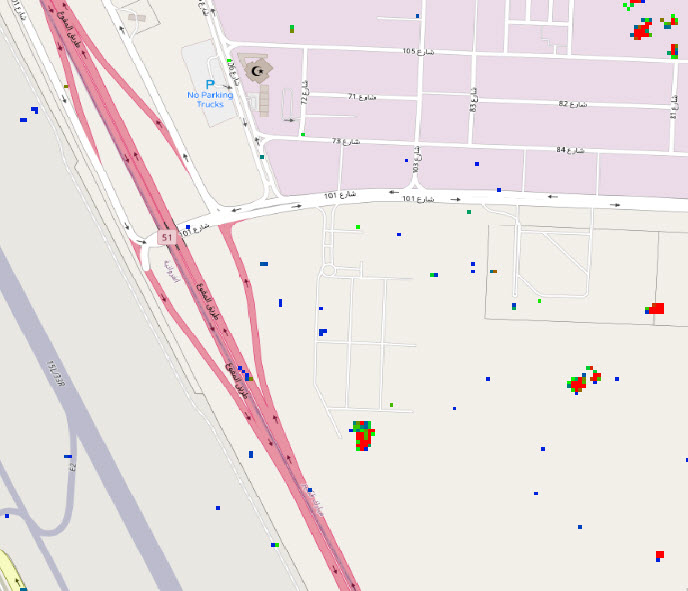

Simularity has successfully detected anomalies using SAR imagery. But first, let’s review what SAR imagery is: Synthetic Aperture Radar (SAR) is a type of radar

On July 14, 2022, a rooftop parking deck in Vancouver, BC collapsed, killing one person who was working in the business below. Here is the

In collaboration with the Simularity Foundation, a non-profit focusing on using technology and remote sensing data to monitor the planet, a new report has been

COPYRIGHT © 2020 SIMULARITY INC. ALL RIGHTS RESERVED.